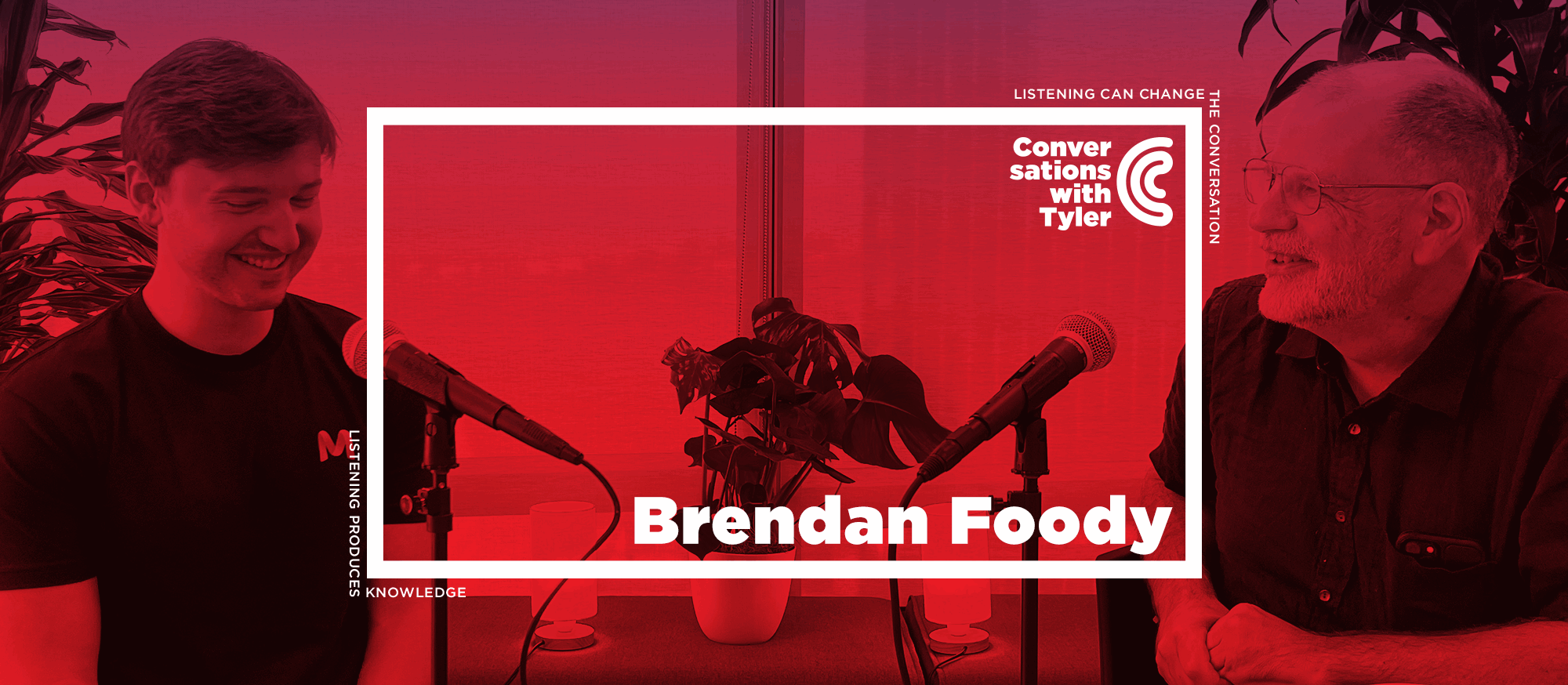

At 22, Brendan Foody is both the youngest Conversations with Tyler guest ever and the youngest unicorn founder on record. His company Mercor hires the experts who train frontier AI models—from poets grading verse to economists building evaluation frameworks—and has become one of the fastest-growing startups in history.

Tyler and Brendan discuss why Mercor pays poets $150 an hour, why AI labs need rubrics more than raw text, whether we should enshrine the aesthetic standards of past eras rather than current ones, how quickly models are improving at economically valuable tasks, how long until AI can stump Cass Sunstein, the coming shift toward knowledge workers building RL environments instead of doing repetitive analysis, how to interview without falling for vibes, why nepotism might make a comeback as AI optimizes everyone’s cover letters, scaling the Thiel Fellowship 100,000X, what his 8th-grade donut empire taught him about driving out competition, the link between dyslexia and entrepreneurship, dining out and dating in San Francisco, Mercor’s next steps, and more.

Recorded October 16th, 2025.

Thanks to a listener for sponsoring this transcript in appreciation of his father, Gil Kemp.

TYLER COWEN: Hello everyone, and welcome back to Conversations with Tyler. Today, I’m sitting here, chatting with Brendan Foody at the offices of Mercor.

Mercor is an AI company — we’ll get into more detail soon enough — which dates from early 2023. Brendan is the CEO and co-founder. I believe he’s the youngest unicorn founder ever. Mercor, by some estimates, is the fastest-growing company ever, for instance, the quickest speed to $400 million. Brendan also, at age 22, is the youngest Conversations with Tyler guest ever.

BRENDAN FOODY: My proudest achievement.

[laughter]

COWEN: There’s more we’ll get to soon enough, but Brendan, welcome.

FOODY: Thank you so much for having me, Tyler. Excited to be here.

COWEN: Now, I saw an ad online not too long ago from Mercor, and it said $150 an hour for a poet. Why would you pay a poet $150 an hour?

FOODY: That’s a phenomenal place to start. For background on what the company does — we hire all of the experts that teach the leading AI models. When one of the AI labs wants to teach their models how to be better at poetry, we’ll find some of the best poets in the world that can help to measure success via creating evals and examples of how the model should behave.

One of the reasons that we’re able to pay so well to attract the best talent is that when we have these phenomenal poets that teach the models how to do things once, they’re then able to apply those skills and that knowledge across billions of users, hence allowing us to pay $150 an hour for some of the best poets in the world.

COWEN: The poets grade the poetry of the models or they grade the writing? What is it they’re grading?

FOODY: It could be some combination depending on the project. An example might be similar to how a professor in English class would create a rubric to grade an essay or a poem that they might have for the students. We could have a poet that creates a rubric to grade how well is the model creating whatever poetry you would like, and a response that would be desirable to a given user.

COWEN: How do you know when you have a good poet, or a great poet?

FOODY: That’s so much of the challenge of it, especially with these very subjective domains in the liberal arts. So much of it is this question of taste, where you want some degree of consensus of different exceptional people believing that they’re each doing a good job, but you probably don’t want too much consensus because you also want to get all of these edge case scenarios of what are the models doing that might deviate a little bit from what the norm is.

COWEN: So, you want your poet graders to disagree with each other some amount.

FOODY: Some amount, exactly, but still a response that is consistent with what most users would want to see in their model responses.

COWEN: Are you ever tempted to ask the AI models, “How good are the poet graders?”

[laughter]

FOODY: We often are. We do a lot of this. It’s where we’ll have the humans create a rubric or some eval to measure success, and then have the models say their perspective. You actually can get a little bit of signal from that, especially if you have an expert — we have tens of thousands of people that are working on our platform at any given time. Oftentimes, there’ll be someone that is tired or not putting a lot of effort into their work, and the models are able to help us with catching that.

COWEN: You’ve had a project lately. You hired Larry Summers, I believe, for finance and economics.

FOODY: That was a little bit of a unique deal.

COWEN: He’s been a guest on this podcast. Cass Sunstein for law. He’s been a guest twice on this podcast. Eric Topol for medicine. I’ve been a guest on his podcast. How do you pick those people? Obviously, they’re highly accomplished, but what makes them good at doing this other than just being smart, productive people?

FOODY: I’ll step back and provide a little bit of context on APEX, or the AI Productivity Index, and why we chose them to help with it. The largest disconnect that we were seeing in AI research is that everyone was focused on academic evals, like GPQA for PhD-level reasoning or IMO for Olympiad math, which were wholly disconnected from the outcomes that customers actually care about: how do we get the model to automate a medical diagnosis, a legal draft, or a certain financial analysis of a company?

So, we chose legal experts, medical experts, finance experts — people that have a broad economic perspective to see what is the right methodology to think about measuring success across each of these domains. Working with them on segmenting: What are all the different industries within law? What are all the different types of law? How do we leverage our marketplace of all of these experts to best capture and measure how well models have automated all of those domains?

COWEN: So, it’s because they’ve had real-world experience, and they’re not only academics? Is that the way to think about it?

FOODY: I think that’s part of it. I think a lot of them obviously have meaningful real-world experience, but also this broad vantage point of the entire industry, of not just someone that specializes in a particular type of law or a particular industry in big law, but rather having this very large perspective in how we should structure the project, how we should think about the rigorous processes associated with curating the datasets, setting up the reviews, et cetera.

COWEN: The paper you did with that group of people as your researchers — and many others, I should add — what’s the main thing you all learned from that exercise and from the paper?

FOODY: I think the largest takeaway is that the rate of model improvement at economically valuable tasks is incredible. If you look at the level that GPT-4o, a frontier model a year ago, scored on this benchmark, and compare that against GPT-5 today, the delta is profound. It often gets —

COWEN: Can you put a number on that or somehow break it down for us?

FOODY: Yes, call it a 25 to 30 percent improvement.

COWEN: Per year?

FOODY: Per year, exactly. Now, GPT-5 is at 64 percent, so maintaining that would definitely be challenging. It gets my mind wondering, what will this technology be able to do in another year or two? And how will that have this profound impact on the economy that so many of us have been wondering about for a while?

COWEN: When you give these numbers, to what extent are you measuring how well they do on the test versus how much economic value are they creating?

FOODY: I’ll walk through the methodology and how we derive that. Essentially, within each industry, we start out with surveys of hundreds of experts. Within consulting, we get experts that were previously at McKinsey, Bain, BCG, and other top consulting firms. Then we survey, how do they spend their time? What percentage of their time is in customer meetings, is in online research, is in analysis, preparing deliverables for customers? Then, within each of those buckets, we ask them to write the corresponding prompts and rubrics associated with how they spend their time.

Using their time is the best proxy we have for the economic value associated with their salary, or what customers are willing to pay for, and it’s incredible to see. The model scoring 64 percent on that is pretty profound. Obviously, there is some complexity in mapping that to economic impact because in certain industries like medicine, you can’t have a 30 percent failure rate. You need near-perfect accuracy, similar to driverless cars in some ways. In other industries, like an initial legal draft or a consulting analysis, this technology is already starting to have a profound impact, and it’s only accelerating.
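
The time-share weighting Foody describes can be sketched in a few lines. This is only an illustration of the idea, not the actual APEX methodology: the function name, the bucket shares, and the rubric scores below are all hypothetical.

```python
def time_weighted_score(buckets):
    """Combine per-bucket rubric scores using share-of-time weights.

    buckets: list of (time_share, rubric_score) pairs, where time_share is
    the surveyed fraction of working time spent in that bucket and
    rubric_score is the model's score on that bucket's prompts, in [0, 1].
    """
    total_share = sum(share for share, _ in buckets)
    if total_share <= 0:
        raise ValueError("time shares must sum to a positive number")
    # Normalize so the weights sum to 1 even if the survey shares do not.
    return sum(share * score for share, score in buckets) / total_share


# Hypothetical consulting survey: (share of time, model's rubric score).
consulting = [
    (0.30, 0.50),  # customer meetings
    (0.20, 0.80),  # online research
    (0.25, 0.70),  # analysis
    (0.25, 0.60),  # preparing deliverables
]
overall = time_weighted_score(consulting)
print(f"time-weighted score: {overall:.3f}")
```

Under these made-up numbers, a model that is strong at research but weak in meetings lands in the low 60s overall, because the weak bucket takes up the largest share of the expert's time.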

COWEN: But isn’t there something about switching from task to task which the models can’t do at all? So, the model would beat me on a test. The model might even run better podcast questions than I do. But somehow, combining those all in a single entity, I can do. Even the best model — it’s still basically at zero as far as I can tell. The economic value is, in a way, still at zero?

FOODY: It’s interesting. I think what you’re getting at is there’re two key things the models struggle at that humans tend to be very good at. The first is these longer horizon tasks of not just something that we could do in a few hours, but something that might take us 50 or 100 hours to do. Then the second thing is integrating multiple tools with our response and going about doing these things, maybe interacting with people as one of those elements. I think that that’s coming very soon. The next version of APEX —

COWEN: What does “very soon” mean? Your best guess.

FOODY: I’ll talk about it in terms of APEX, and then I’ll talk about it in terms of model advancement because there’s a large correlation between the two. We’re doing a lot to measure all of those capabilities and how models interact with the entire workspace, and how models do these very long-horizon tasks in an eval that we’re launching in the next couple of months. Very quickly, once researchers are able to measure those capabilities, they’ll be able to help flag them. I would be shocked if we don’t have enormously capable models across those dimensions of lots of tool use with very long horizon tasks in the next six to twelve months.

COWEN: Let’s just take the body of knowledge alone. Forget about the long horizon. Just an on-the-spot test. Let’s say I’m Cass Sunstein. I know Cass. He has an incredibly impressive body of knowledge in many areas. When are we at the point where, basically, Cass cannot ask a question that the best models cannot answer?

FOODY: Wow, that’s an interesting question. I think it depends domain by domain, but in law, I think it’s going to be a long time. The reason is that there’s so much taste involved in legal responses that effectively getting all of the taste that Cass has into the model is going to be difficult. I do think we’ll very quickly get to the point where Cass has a really hard time finding a mistake the model makes, where he has to spend maybe a week just trying to probe it.

COWEN: How far away is that?

FOODY: That might be about two or three years, it wouldn’t surprise me, for a question and response.

COWEN: I would think it’s six months away, would be my guess.

FOODY: Maybe.

COWEN: If he asked it 1,000 questions, I think he could induce an error. Fifty questions — I think, in less than a year, we might be there.

FOODY: It depends also a little bit on how tightly you define an error. He might have all sorts of knowledge of niche areas of the law that the model isn’t strong at. There’s some question of how you measure this, but I hold Cass in very high regard with respect to his niche knowledge of the law and ability to stump the models.

COWEN: What would be an area where the human expert is relatively strong and an area where the human expert, compared to the model, is relatively weak?

FOODY: Interestingly, there are a lot of areas in law where the right way of approaching something is not written down or codified, at least not explicitly. It exists more in the heads of experts. I think it’s those domains where there’s a lot of taste that isn’t well-documented that the models will struggle immensely with, because they need those tokens either in the pre-training data from these web-scale training runs or in the post-training data, from having a legal expert from us create those datasets. If they don’t have those, then the model will inevitably struggle with that particular problem.

COWEN: Now, I’ve argued in economics that the leading economics journals should take their referee reports and the submissions and send them somewhere, arguably here. Would that be useful to you?

FOODY: It certainly would. It’s something we’ve talked about a bunch in the past. I think that the largest way that these deep domain experts can help to contribute to the advancement of AI is defining the evals. When we have these phenomenal tests for model capabilities — whether in economics, law, or other domains — it’s amazing how fast the researchers can hill climb them and optimize around them. So, more help in building these tests and sending them to us and other labs is extremely impactful.

COWEN: Those are nonprofits, those institutions. Why don’t they just send it to you now for free? Do you have a theory of this?

FOODY: I’m not sure exactly.

COWEN: It would improve science, right?

FOODY: It would improve science. I think maybe two things. One is awareness of this. I think that while evals are the thing that everyone’s talking about in Silicon Valley and the AI labs, it feels like most people in the rest of the country couldn’t quite describe exactly why you need an eval.

I think the second is a little bit of fear, where everyone worries about how is AI going to impact their jobs, their work, their ability to contribute to the economy, and be meaningful. I think that that’s always top of mind, even for nonprofit organizations that want to contribute and preach this world of abundance.

COWEN: Let’s say we took the live economics or legal, whatever seminars — let’s say the top 10, top 20 schools — recorded them all, somehow anonymized the data, but you had the comments in transcript, and sent that to you. Would that be useful?

FOODY: It would be very useful. One thing I will say, though, is that a good way of thinking about it is that there are two kinds of data. The first kind of data is just the output, where you have some curriculum that the model is reading and learning from.

The second kind of data is some way of measuring success, where you have the rubric for the response, you have the test question and answer, you have the unit test, and code. That second kind of data is the most valuable, where we’re able to have the models attempt the problem many, many times, score those responses, and learn from them, but both are incredibly impactful and things we would love to get support with.

COWEN: On your wish list — just to make this more concrete — you can have some kind of data. Forget about realism, you just get it for free. What is it you most want? Let’s say social sciences, forget about realism.

FOODY: Interesting. Forget about realism, social sciences. I think that we tend to focus a lot on what’s economically valuable. If people have tests that the models are bad at, that map to a meaningful amount of economic value — and it could be an academic domain that can be applied to create a lot of value in other areas — that’s super exciting for us. Maybe a good heuristic is, if we could build a model that, without seeing this test and reading through it, could max out the test, how much economic impact would that add? Whatever test is able to measure that the best is most helpful.

Maybe in medicine, it’s a test around how well the model is doing a certain diagnosis in a particularly difficult domain where we think the models can add a ton of impact. Maybe in economics, it’s areas of analysis and modeling of businesses that aren’t well codified but could meaningfully impact the way that we underwrite businesses. Those types of things are what’s going through my head.

COWEN: Let’s say it’s poetry. Let’s say you can get it for free, grab what you want from the known universe. What’s the data that’s going to make the models, working through your company, better at poetry?

FOODY: I think that it’s people that have phenomenal taste of what would users of the end products, users of these frontier models want to see. Someone that understands that when a prompt is given to the model, what is the type of response that people are going to be amazed with? How we define the characteristics of those responses is imperative.

Probably more than just poets that have spent a lot of time in school, we would want people that know how to write work that gets a lot of traction from readers, that gains broad popularity and interest, drives the impact, so to speak, in whatever dimension that we define it within poetry.

COWEN: But what’s the data you want concretely? Is it a tape of them sitting around a table, students come, bring their poems, the person says, “I like this one, here’s why, here’s why not.” Is it that tape or is it written reports? What’s the thing that would come in the mail when you get your wish?

FOODY: The best analog is a rubric. If you have some —

COWEN: A rubric for how to grade?

FOODY: A rubric for how to grade. If the poem evokes this idea that is inevitably going to come up in this prompt or is a characteristic of a really good response, we’ll reward the model a certain amount. If it says this thing, we’ll penalize the model. If it styles the response in this way, we’ll reward it. Those are the types of things, in many ways, very similar to the way that a professor might create a rubric to grade an essay or a poem.

Poetry is definitely a more difficult one because I feel like it’s very unbounded. With a lot of essays that you might grade from your students, it’s a relatively well-scoped prompt where you can probably create a rubric that’s easy to apply to all of them, versus I can only imagine in poetry classes how difficult it is to both create an accurate rubric as well as apply it. The people that are able to do that the best are certainly extremely valuable and exciting.
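
A rubric of the kind described here, with rewards for desirable traits and penalties for undesirable ones, might be sketched as follows. The criteria, point values, and names are hypothetical, and the simple string checks stand in for judgments that an expert grader or a grading model would make in practice.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Criterion:
    description: str
    check: Callable[[str], bool]  # stand-in for a human or model judgment
    points: float                 # positive rewards, negative penalizes


def score_response(response: str, rubric: list[Criterion]) -> float:
    """Sum the points of every criterion the response satisfies."""
    return sum(c.points for c in rubric if c.check(response))


# Hypothetical rubric for a prompt asking for a short poem about the sea.
rubric = [
    Criterion("evokes the sea", lambda t: "sea" in t.lower(), 2.0),
    Criterion("leans on a stock cliche",
              lambda t: "roses are red" in t.lower(), -1.0),
    Criterion("has at least four lines",
              lambda t: len(t.strip().splitlines()) >= 4, 1.0),
]

poem = (
    "The sea forgets\n"
    "what the shore recalls,\n"
    "salt on the step,\n"
    "light on the walls."
)
total = score_response(poem, rubric)
cliche_total = score_response("Roses are red, violets are blue", rubric)
print(total, cliche_total)
```

The hard part Foody points to is not the arithmetic but writing criteria that stay accurate across an unbounded range of poems.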

COWEN: To get all nerdy here, Immanuel Kant in his third critique, Critique of Judgment, said, in essence, taste is that which cannot be captured in a rubric. If the data you want is a rubric and taste is really important, maybe Kant was wrong, but how do I square that whole picture? Is it, by invoking taste, you’re being circular and wishing for a free lunch that comes from outside the model, in a sense?

FOODY: There are other kinds of data they could do if it can’t be captured in a rubric. Another kind is RLHF, where you could have the model generate two responses similar to what you might see in ChatGPT, and then have these people with a lot of taste choose which response they prefer, and do that many times until the model is able to understand their preferences. That could be one way of going about it as well.
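
The pairwise-preference idea can be illustrated with a small Bradley-Terry fit, which turns "which of two responses do you prefer?" choices into one score per response. This covers only the preference-learning step and is an assumed, simplified stand-in for what labs actually do; the function name and comparison data are hypothetical.

```python
import math


def fit_preferences(pairs, n_items, lr=0.1, steps=500):
    """Fit one score per item from (winner, loser) index pairs.

    Uses gradient ascent on the Bradley-Terry log-likelihood, where the
    probability that item i is preferred to item j is sigmoid(s_i - s_j).
    """
    scores = [0.0] * n_items
    for _ in range(steps):
        grads = [0.0] * n_items
        for winner, loser in pairs:
            # Probability the current scores assign to the observed choice.
            p_win = 1.0 / (1.0 + math.exp(scores[loser] - scores[winner]))
            grads[winner] += 1.0 - p_win
            grads[loser] -= 1.0 - p_win
        scores = [s + lr * g for s, g in zip(scores, grads)]
    return scores


# Hypothetical choices among three candidate poems (indices 0, 1, 2).
# Graders disagree some of the time, as discussed earlier in the interview.
comparisons = (
    [(0, 1)] * 3 + [(1, 0)] * 1 +
    [(1, 2)] * 3 + [(2, 1)] * 1 +
    [(0, 2)] * 2
)
scores = fit_preferences(comparisons, n_items=3)
ranking = sorted(range(3), key=lambda i: scores[i], reverse=True)
print(ranking)
```

In a full RLHF pipeline, scores like these would come from a learned reward model over text rather than a lookup per response, and the model would then be optimized against that reward.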

COWEN: I’m sure you know these studies where there’re some AI-generated poems and some human-generated poems. Often, the humans prefer the AI-generated poems, even though, to people with “taste,” they’re worse. Whose side do you take there?

FOODY: It depends what you’re optimizing for. I think that generally, we’re in the mindset of, for the power users of these AI products, what are the types of responses that they would want to see and be happy with? But it’s challenging because that sometimes deviates from the types of responses that the top 1 percent of experts in poetry might say is a broadly good poem. So, striking that balance is really up to a lot of the researchers and product leaders at the labs. What do they think good looks like? And how do we act as their partner in defining that?

COWEN: If you could model a much older poet — William Wordsworth, Blake, John Milton, Rilke — some of my friends say there are no truly great poets left anymore. The best poets were way back when. Is it a goal to model the older poets and figure out what they would think, and rather than having Larry Summers and Cass Sunstein come in, that you have some AI-generated model of John Milton?

FOODY: Maybe. [laughs] I will say it ties back to the goal of APEX, which is that we saw people were too focused on a lot of these purely academic domains and not focusing enough on how will people actually use the models in the economy. But I certainly do think that, especially as we start to automate more industries and there’re more liberal arts and these kinds of domains where people want to spend time on poetry, certainly building the tools to help them create phenomenal poems and make them happy and their readers happy is definitely the way we would go about it. I’m not sure if it would be using the archetypes of these former poets. How would you go about it, Tyler?

COWEN: I don’t know. I don’t trust contemporary poets, frankly. There aren’t many of them I like to read. Maybe Geoffrey Hill would be one. Some are too postmodern. Some maybe are too woke. Some are too identity-driven. I love older poetry, so it’s not that I don’t like poetry. I worry about putting them — they’re not quite in charge, I get that, but giving them so much leeway.

FOODY: It does evoke this really interesting idea of how we want to teach models and measure the success of these models. Is it via consensus? Is it via a handful of the top experts in that given domain? There’s really no correct answer. I think that different AI labs, different researchers will go down different routes, and that will frame the ways that these products feel and the things that they ultimately achieve.

COWEN: Maybe we should only enshrine the current age when the current age is at a peak. Scott Sumner says the best movies were maybe made in the 1960s and ’70s. Whether or not you agree, you could have movie evaluators be only from that time. There are some still alive. If you think the best heavy metal, say, comes from the 1980s, well, you wouldn’t have the current evaluators. You would pick evaluators from the ’80s.

The best poetry does seem, by most people’s standards, to be really quite old, and we can’t resurrect those individuals. But the notion that you enshrine current taste when taste changes so much — it’s a very interesting decision.

FOODY: It certainly is. My guess is that in a long enough time horizon, we’ll enshrine taste from every different decade and every different era. Then the model will be able to learn what taste do you have and how does it pull on each of those knowledge bases to best personalize it to your preferences.

COWEN: How much of society, ideally, should become a big reinforcement learning machine? We tape everyone, everything, every debate people have over the coffee table.

FOODY: I think it will become an immense amount very quickly. There’s obviously still going to be the personal conversations over the coffee table that people don’t want recorded. But my firm belief is, especially for economically valuable tasks, we’ll move towards a world where people do things once.

Instead of the investment banker redundantly analyzing a data room to prepare an analysis of a company every couple of weeks for a new project and a new customer, they’ll teach the model how to do that once in the particular domains that they operate in. Similar to building software once, they’ll be able to use that many times as they use their agent. Instead of the customer support rep monotonously responding to tickets every day, they’ll find the mistake that the agent makes. They’ll turn that into an RL environment, and then, all of a sudden, the agent will be able to solve that problem many times.

I think, in many ways, the economic incentives and how knowledge work will change has a lot of similarities to software, and that we’ll move towards these fixed-cost investments of teaching an agent how to do something, building an RL environment for something, and then being able to use agents as many times as we want to perform that activity. That’s why I believe that a huge portion of the economy will become an RL environment machine.

COWEN: Do you think pendants or Meta-like glasses will be more important than that? Are we going to do both?

FOODY: I think a lot of both.

COWEN: If I take myself, I don’t do that much small talk. Say you attach the little pendant to me, and you got the tape of all my conversations, you could feed it in. What’s the social value of that? Is it $5, $50, a bit more? How valuable is that?

FOODY: It certainly depends a lot by person. I would imagine yours are quite valuable.

COWEN: Quite? How much would you —

FOODY: Could be a good side hustle.

[laughter]

COWEN: I’m not asking for an offer, but how much, actually, would you pay?

FOODY: I would pay a lot just out of pure curiosity. If I were trying to think about how valuable it would be to our customers and our business, I imagine it would be something in the order of — it’s hard because it changes over time — certainly tens of thousands, if not many hundreds of thousands of dollars a year, and how that evolves over time.

But my guess is that for the vast majority of people, they’ll still care a lot about privacy, so maybe that data will be collected to personalize their individual agent, but they’re not going to be comfortable with that getting added to the broader model weights to customize the base model that billions of users are —

COWEN: But that’s easy. You can tape me with my pendant. I run it through my AI, and I say, “Take out anything I don’t want Mercor to hear.” And it will do that quite well, maybe not perfectly. Then you get what’s left over: all the debates about elasticities and tax incidence.

FOODY: Maybe. I suspect you’re probably more comfortable with it than most people. [laughs] Most people would probably say, “Well, you’re asking the AI to be the layer of trust to remove the sensitive information, but it’s going to have a bias in doing so.”

I think there’s always going to be some level of sensitivity around these topics. I actually believe that some of the companies that have done a very good job around their brand of privacy are going to have an advantage in it. Apple — while maybe not totally at the frontier of AI yet — has done such a good job in their brand around privacy. That’s going to allow them to have a lot of trust from users in a way that they’re able to collect all of this personalized information.

COWEN: Let’s say three to five years out, when the top models will be both clearly better than virtually all human experts or maybe all human experts and recognized as such — the latter we certainly don’t have — what do you think, in that world, the reputation of expertise is like?

One view is no one respects the experts because the machines are better, but I think an alternative possibility is the machine, by not being tied to a personality, is less disliked. People actually respect the experts more because they get this impersonal distillation of the experts. It’s like, “Oh, the experts did that. They’re so amazing. They’re not annoying me like on the late-night TV show.” What will happen to the status of human experts?

FOODY: I think so. I think that I definitely am already at the point where there are certain domains where I trust ChatGPT or whatever model I’m using more than I trust a particular expert in that industry for a very quick medical perspective even in some cases, or whatever it is. I think that there’s some element of it being highly competent. There’s some element of it not having a face to it that causes us to place this high trust.

But I do think that the point you made at the beginning evokes the question of when these models will be able to do everything that experts are able to do. My read on the market is that models are advancing very, very quickly in being able to automate — call it 50 percent or 75 percent of what humans and experts are able to do — but will really struggle with that last 25 percent. I think that for a very long time, human expertise will be imperative to help accomplish that last 25 percent as the ultimate bottleneck to more economic prosperity and productivity.

COWEN: How long until the best models can write a poem as good as the median Pablo Neruda poem?

FOODY: Oh, I think that’s probably not too far off. I think the models —

COWEN: I would say less than a year. When you say —

FOODY: Yes, less than a year.

COWEN: Less than a year. How about the very best Pablo Neruda poems?

FOODY: I’m not too calibrated on poetry, so I’d have a hard time saying.

COWEN: I think it’s much further out. Is that your intuition?

FOODY: I agree. I think that’s consistent with my intuition as well. I think that this longer tail of advancement is generally the most difficult.

The other heuristic I have for it is that, going back to this dimension of the time horizon of the task, models are in some ways superhuman with what you can do in a chat window with your chatbot, but they still can’t draft an email for us. They still can’t schedule a meeting. Those things will come, but I think that there’s a long way before we’re able to tell a model, “Go off and build a startup for 90 days.” There’s going to be an immense amount of human expertise associated with how do we get to that across every knowledge work vertical that we want the models to operate in?

COWEN: Insofar as we turn society into this big engine for reinforcement learning, what new jobs get created by doing that?

FOODY: I think the most interesting part of our business is that everyone else in Silicon Valley is talking about how we automate away jobs, versus we’re very focused on how do we build this new job category of people training agents, building RL environments to help teach models. That’s what I believe it’ll converge to. Instead of the investment bankers doing the analysis, they’ll build RL environments and train agents. It’ll be the same across consulting, software engineering, customer support, and pretty much every knowledge work vertical.

It’s hard to say the exact pace at which that’ll happen, but I would not be surprised if, within five years, a majority of high-end knowledge workers are training models, whether in their full-time jobs or through our marketplace, to help improve agents at whatever workflows they want to automate.

COWEN: To hold those jobs, how much technical AI will a person need to have? Or do they just have to know about the thing?

FOODY: They just need to know about the thing. The only element of technical AI that they’ll need is to find where the model makes a mistake. As long as they can find where the model makes a mistake and understand, in some ways, the frontier of the model and its capabilities — how you can push it to its limit — then it’s relatively easy to create some criteria or way of measuring that mistake so that the model can learn from it.

I think we’ll have that across every different vertical, with every different tool, with these very long horizons, whether it’s 100 hours or 100 days that we want the model to work on something. That’s going to very quickly become the primary bottleneck to model improvement.

COWEN: Is the demand for software price elastic?

FOODY: I think it’s extremely price elastic. In fact, I think that the elasticity is the exact right thing to home in on with respect to how job displacement will evolve in these domains. I think if we make software engineers 10 times more efficient, we’ll have even more software engineers. Maybe we’ll have 10 times as many software engineers and build 100 times as much software, right? Versus other domains, maybe that’s not the case. Maybe we only need so much accounting in the world, or we only need so much customer support, but I think software engineers certainly will be able to do so much more.

COWEN: Where else do you think of as price elastic?

FOODY: I think that building businesses is also. A lot of the product and distribution associated with software is certainly going to be something we see a lot more of. I think there’re a lot of domains. Even if you think about investing — obviously, it’s not as price elastic as software, but I do think that there’s still enormous inefficiency with respect to how we allocate capital in the economy.

If I think back to the early days of Mercor, we were having a hard time getting our $10,000 of working capital for our initial seed investments. Then very quickly, once you get to a reasonable scale, the markets are very, very capitalized. I think a lot of this early capital allocation, as well as even just better understanding how companies will develop over time, is going to be really interesting.

Also, how that information and analysis manifests itself within companies. For an operator — they have this investing problem of what are all the different bets that they have within their companies? How do they allocate capital and resources associated with that? I think that there’s so much elasticity with respect to how we build more products, how we distribute those products, and how we allocate resources within companies more effectively.

COWEN: What will education look like five to ten years out?

FOODY: I think education is one of the things I’m most excited about, where a good heuristic is if everyone has Sal Khan as their personal tutor, available 24/7 to teach them whatever topic they want to learn. It’ll be that it’s much easier to motivate themselves. It’s much better access to information, much better ways of explaining that information, and that’ll be profoundly impactful.

COWEN: But that seems less price elastic, right?

FOODY: Yes.

COWEN: Only so many hours of Sal Khan a day, no slight intended to him. But it’s not going to be 27 hours a day, right?

FOODY: That’s true. That’s true.

COWEN: Employment for teachers, researchers might shrink?

FOODY: I think in some ways, areas of that may shrink. But I also think that there’s a large element to teaching that exists in personal relationships, of which the model will be able to do part, but not all of it, of how does the teacher act as guiding the student through their journey and helping them to improve both in their curriculum as well as their emotional development. So, I think teachers will still play an important role in the economy, ideally, able to just provide higher touch points of contact with all the students in smaller class settings.

COWEN: This is October 2025. How many people work at Mercor?

FOODY: Right now, we have just over 300 people across the world as our full-time employees.

COWEN: How did you hire so many good people so quickly?

FOODY: We used our technology and our platform to help with it a bunch. The origin story of the company was automating all of the ways that we would review resumés, conduct interviews, and decide who to hire. The ways that we assess talent, the ways that we optimize funnels to build out teams, are really ingrained in the DNA of the company and a top priority for me and my co-founders. I’m extremely grateful for everyone that we have on the team, and they make it look easy.

COWEN: How do other people do interviews wrong?

FOODY: This is something we’ve talked about a bunch because you obviously wrote a phenomenal book on talent. I think one of the largest mistakes that people make is that they don’t measure the actual skills and capabilities that they want someone to exhibit on the job. Instead of focusing on how do we measure how well this person does their investment analysis of the data room, they have this vibes-based conversation of where did the person grow up, how similar are they, do they think they would enjoy hanging out together.

Obviously, that’s still important if you’re having a working relationship, but I think that they often over-index on that relative to the skills that people actually exhibit.

COWEN: Just give them a project and grade them, in essence.

FOODY: I think that’s the cleanest way to do it.

COWEN: Let’s say it’s not programming. As the company gets bigger — the major AI companies — a lot of them now are quite large, and most of the people who work there don’t do AI at all. They do jobs that are not so dissimilar from what they might do at Coca-Cola, which is fine. It’s just part of growth. They’re legal, they’re communications, they do events, whatever. When you’re trying to hire people like that, say, what’s the test? What’s the project, or what is it you look for?

FOODY: I think that’s definitely more difficult. You probably want to look for cases in their life where they’ve worked in similar roles because you can’t curate a project that’s as similar to exactly what they’ve done. You would see the best proxy for that, and then really drill in to understand the details of that working environment, how similar it is, how well they performed in that, talking to people that previously worked with them in that environment to get a gauge for it.

It definitely is more difficult to measure someone’s slope and how they’ll develop on the job over a six-month time horizon than it is to measure their Y-intercept. I think that’s one trend that we’ve found in talent assessment.

COWEN: Do you think body language in an interview is predictive?

FOODY: I think it can be, but I also think it can be a false signal because I’ve definitely had cases where I over-index on, “Oh, this person feels a little bit awkward,” or whatever it is, but they do a phenomenal job at the actual work. So, I think it’s important to be very cautious around which of these signals are actually correlated with performance and which ones aren’t.

COWEN: Articulateness — overrated or underrated?

FOODY: It depends a lot on the job. [laughter] It depends a lot on the job.

COWEN: Let’s say 10 years from now, when we can really measure pretty well the performance of people we’re interviewing today — less than 10 years, but let’s say 10 years. Let’s say you have a company, such as Amazon, that does a very large number of interviews. Let’s say they’re all taped, and you run them through the best AI models. How good a predictor do you think that will be, in your opinion?

FOODY: I think that it will be certainly superhuman because humans aren’t very good at it, but it’s still such a difficult problem that there’s going to be variance. For roles like the one you described, what’s going through my head is there’re a lot of confounding variables. Did the person have an issue in their family that caused them to be off their game or not show up to work? Did they get sick during the interview process and maybe weren’t full of energy?

There’re all these things that just add noise to that problem, but I do believe that, as we’re able to get all of that data in context, to have all the notes from the manager around what was happening in this person’s life, both during the interview process as well as on the job, that will allow it to, over time, become phenomenal. Maybe we have that on a 10-year time horizon.

COWEN: How can we make labor markets more efficient?

FOODY: I think that one of the largest inefficiencies in labor markets is that everything is disaggregated, and that when one of our friends is applying to a job, they would apply to a couple dozen jobs. When a company is considering who to hire, they’ll consider a fraction of a percent of people in the economy. It feels like there needs to be a structural change there, where there’s an aggregator that everyone applies to, and every company hires from, facilitating this perfect flow of information.

COWEN: We need a very good AI for that to work?

FOODY: I think a very good AI will help with that working. The reason I think it doesn’t happen today is that there’s a very difficult matching problem. Let me give LinkedIn as an analog. LinkedIn has all the distribution to pretty much every company and every candidate, but at the very same time, it’s incredibly difficult to understand, based on someone’s LinkedIn profile, whether they’ll actually perform well at a given job.

I believe that, in that case, it’s very much a matching problem — less so a distribution and aggregation problem — to facilitate this effective flow of information and aggregation within knowledge markets.

But I think it’s also in line with the fact that the nature of jobs is changing dramatically. Previously, everyone would think about this problem in the context of full-time roles, but as we trend towards this world of everyone building our RL environments, and being able to do work remotely, and train models in this fractional way, that also will shift the dynamics of enabling more aggregation, enabling more globalized matching, and how that will impact the economy.

COWEN: Some of my friends think that mentors and nepotism will make a good comeback. They say everyone will submit a perfect cover letter, have an optimized LinkedIn profile. They’ll even have practiced with an AI doing the interview. They won’t all get up to speed, but a lot of them will, and there’ll be this large mass of apparently pretty qualified candidates. What you’ll actually do is resort to the old tried and true. Do you know this guy’s uncle, or someone else who can recommend them? Agree? Disagree?

FOODY: I think in some companies and industries, that will happen. I agree with it. My hope is that we have models that are helping to run companies in a very thoughtful, efficient way that are data-driven about it, where the models have an eval set of all the performance reviews of people in that given company, and they’re able to make an accurate prediction over whether this reference or that piece of nepotism should actually be considered, or maybe treated as a countersignal. That’s my hope, but it’ll probably play out with some combination of both over time.

COWEN: In the AI-run labor market, let’s say, it’s more efficient, but do you think there are fewer second chances and late bloomers in that world? You get scored too early, so to speak, and then you’re tracked. It’s a bit more like how European schooling systems can differ from American.

FOODY: I think there will be a lot of second chances, and the reason that there will be is that oftentimes they’re effective. The models will identify that, and realize that maybe someone wasn’t the right fit for that first role. There’s another role that they could be a really good fit for. I do think that there are jobs in the economy that almost everyone would excel at, and it’s really just this matching problem of finding the intersection of something that they’re excited about where they’ll also add an immense amount of economic value.

COWEN: As you know, there are AI services now. You’re doing an interview across the top or the bottom of your screen. The AI can give you advice, answers. Does that work at all? What do you think of those?

FOODY: We run up against a lot of those. One thing I’ve found in talent assessment is that, initially, people tried to work against AI, similar to what we do in academic settings, where people would try to say, “We’re going to have you write the essay on paper so that you’re not able to use ChatGPT to help you with the essay.” Really, the right way of approaching it is seeing what people can do when using all of those tools.

If we tell them, “Hey, use all of these phenomenal Codegen tools, and record your screen in building a product to see what you’re able to do over the course of an hour,” that’s a far better predictor of this person’s ability to actually deliver impact than it is to say, “Don’t use the tools at all.” So, I think that’s one shift that we’re going to see, and will likely frame the relevancy of a lot of these AI cheating tools over the coming few years.

COWEN: Can someone fool you by using an AI cheating tool, or do you feel you more or less always know?

FOODY: I think that there were cases where people could fool us, but now we’re quite good at figuring it out. [laughs] We’re quite good at figuring it out and also moving towards assessments where we almost encourage it, and are comfortable with the fact that they’re using these tools because we want to see what they’re able to do with them.

COWEN: You were a Thiel Fellow, and you dropped out. How could they improve their methods?

FOODY: Well, [laughs] this is something we’ve talked with them a lot about because the Thiel Fellowship is constrained by that exact matching problem that we were talking about earlier, where they can only consider, and interview a fraction of a percent of the people in the world that they think would be a good fit for the fellowship. So, we’ve worked with them on building out AI interviews that are able to better assess Thiel Fellows, and using models to analyze the transcripts of those recordings to see what are the signals to better select Thiel Fellows, and all of that, which I find very interesting.

COWEN: But isn’t it part of their strength? Say, Peter — he’s quite controversial, politically and otherwise. Being a Thiel Fellow has a certain brand that’s distinct from anything political, but it’s a very particular thing. Not everyone wants to do it. Doesn’t it work well because it’s an extremely local market, and you get people with a certain orneriness, and selecting from that pool just goes pretty well, and you don’t want to be in the bigger pool of people?

FOODY: I think that you’re right in the element that referrals are very important. Oftentimes, great people know great people, and so they’ll always need to leverage referrals.

But at the same time, I think they rightfully care a lot about people that think unconventionally and come from unconventional backgrounds — the people from every part of the world that might otherwise not get a meeting with a venture capitalist or some of these more traditional institutions, and so, ensuring that they’re able to consider those candidates, and give them this opportunity, and incorporate them into the fellowship is incredibly important, and part of the mission.

COWEN: Could it be scaled 10X?

FOODY: Absolutely.

COWEN: 100X?

FOODY: I think so.

COWEN: 100,000X? [laughs]

FOODY: I think it ties to what we were talking about earlier, of the elasticity of demand for better investors, because in some ways, hiring has so much overlap with investing. Imagine if we could have Peter interview everyone in the world when they’re 18 or 20, or whatever the age is, and make a decision around whether he wants to give them a 100K check. That would probably be very powerful with respect to economic mobility and how many companies we’re able to create. I think that will happen. It’s just a matter of time of building the right technology and the right focus to enable it.

COWEN: Is the following possible? Let’s say Peter is just a tremendous interviewer — that’s easy to believe — but he’s really a great interviewer for the subset of people attracted to him. If you just put him out in the broader pool, who’s going to be a lifeguard at the swimming pool, or something? Maybe he’s just not that good an interviewer for that.

FOODY: I agree with that. I think that’s certainly the case. Imagine if you had a panel of domain experts across every industry that were able to perform these interviews. Certainly, the best models will be better than any single best individual, but I would expect that the aggregate sum of all experts in each domain will likely remain better than the models for a long time.

COWEN: Now, you dropped out of school. Now, you’re doing the company. Obviously, you’re very busy, but imagine, as an act of magic, you could have a free year just inserted between today and tomorrow, and you come back and nothing has changed. To go off and do anything you want with literature, with art, with travel, with music, climbing the Alps, I don’t know. What would you do with the year?

FOODY: That’s a fascinating question. Can it be AI-related?

COWEN: No.

[laughter]

COWEN: Cannot be company-related.

FOODY: Let’s see. I would love to travel. I think that sounds like it would be a lot of fun because, as you can imagine, in running the company, I’ve worked a hundred hours a week for the last three years, and I love doing it, and I’ll continue doing that.

But I do think that seeing the world, and getting more of this understanding of how do perspectives vary by country and geography, how are people thinking about AI differently elsewhere is really interesting. I really like that. I remember after ChatGPT came out, Sam did this world tour of going to all the different places, seeing what they thought about AI, how they viewed it impacting their world. I think that global perspective is incredibly valuable, and informative.

COWEN: Where do you want to go the most?

FOODY: I want to go to Japan a lot. I’ve never been to Japan, so I’ll have to make it out there. That’s probably my top pick.

COWEN: It’s a great visit. One thing I’ve found since I have traveled a lot — obviously, I’m older, and in some ways, less busy than you are — that it helps me interview quite a bit, because people more and more come from all over. It’s like if your model has the poetic taste of different eras — of John Milton, Wordsworth, Shakespeare, whatever — traveling is an individual’s version to get some version of that.

FOODY: Yes.

COWEN: Say you hire a lot of people from India. I suspect you do. It’s a populous country, a lot in the Bay Area. Going to India then becomes very important because you get a better sense of just where they’re coming from.

FOODY: Yes, I completely agree. I think also being able to connect with those individuals very quickly around “Hey, I’ve been to this place. I’m very familiar with India, and all these different things” is really helpful in building relationships and setting up trust across all the different people that we work with and interact with.

COWEN: How did your 8th-grade donut company go?

FOODY: [laughs] One of my favorite topics. I can tell the story, which is, I initially realized that Safeway donuts were selling for $5 a dozen. My 8th-grade mind was thinking that is such a deal. I would pay $2 a donut, and I bet my friends would as well. So, I would bike down to Safeway, and I would buy Safeway donuts for $5 a dozen, go to my middle school, and sell them for $2 each.

Eventually, my middle school called me into the principal’s office to shut me down, because I was scaling up my operations. Then I moved my donut stand about 50 feet off of school campus so they couldn’t police me. I paid my mom $20 a week to drive me in her minivan to be able to bring more donuts from Safeway.

COWEN: She charged you $20, your mom?

FOODY: She charged me $20, exactly.

COWEN: Was that underpriced or overpriced?

FOODY: [laughs] I think it was about right. I anchored it on the cost of an Uber. I was like, I’m not going to pay more than an Uber, but I need the car to wait long enough that I’m able to load up 10 or 20 dozen donuts, so I did this. I’d pay my friends in donuts because I perceived the cost of the donuts as my cost basis versus they perceived it as $2 each. I had a little bit of arbitrage in the salaries.

I had competition pop up, where they would sell Chuck’s Donuts, which are higher-end donuts, but they had a $1 cost basis. I dropped my prices to $1 for two weeks to drive them out of business before I had learned anything about [laughs] anti-competitive laws. Those were just a few of the stories from my 8th-grade “donut dynasty” is what we called it.

COWEN: Other than just intelligence, what makes a person good at extemporaneous speaking? You won awards for this, right?

FOODY: I did. Actually, I won awards for it, but I wasn’t nearly as good as my co-founders. In high school, we all did speech and debate together.

COWEN: You knew each other from high school.

FOODY: We knew each other from high school.

COWEN: Like age 14, right?

FOODY: Age 14, exactly. We were on the policy debate team together. We also did national extemporaneous speaking. They were the winningest speech and debate team of all time in policy debate, the most competitive event, where they won the Tournament of Champions, the National Speech and Debate Association, and NDCA — the three largest national tournaments, which no other team has ever done. I did okay, but I’m dyslexic, and so I [laughs] would always stumble over words, or mix things up. I wasn’t quite the same level as them.

I think there’re a few things that go into the answer of what makes one phenomenal at it. I think that high clarity of thought often correlates very strongly with people that speak very well, and so, as you mentioned, intelligence plays another role. I think a second thing is confidence. Someone that’s willing to speak and improve and iterate on it, because oftentimes, it’s just doing more of that activity that allows you to improve on it.

Then maybe a third one is, more than just intelligence, it’s also the speed of thought. I think about those as different dimensions. There are certain people I think of as having very high aptitude, but thinking very deeply and slowly about a given thing. Other people that I think of as having reasonably high aptitude, or medium aptitude, but being able to be quick on their feet. I definitely think there’s some innate element of that.

COWEN: Which are you?

FOODY: I tend to think I’m more in this slower, deeper thinking bucket, but it depends a little bit on how much coffee I’ve had. [laughs]

COWEN: You started the company when you were 19. Why is it there’s a positive statistical correlation between being dyslexic and entrepreneurship? There is one in some published papers. What’s the mechanism?

FOODY: It’s shockingly strong, actually. I’m not sure exactly, but I find that one unique thing is that it feels like my brain works a little bit differently, and that there are certain things that people are so much better at than I am, where they’re reading through evidence in a debate round very quickly, and I could never do that.

But there are certain ideas or ways of approaching a problem that are just different, that enable more creativity, potentially being unconventional in doing so. I think that that is one advantage I’ve had. One of our early investors, actually, Scott Sandell, is dyslexic, and has backed a lot of dyslexic entrepreneurs, and so we’ve talked about this a little bit.

COWEN: One of my hypotheses is that quite early on, you have to learn how to delegate. That’s a skill that when people are not forced to learn, often very competent people don’t become good at it until much later, but a dyslexic person is good at it right away.

FOODY: Totally. Yes, asking people to help read something for them. [laughs]

COWEN: That’s right. “Could you please do this for me?”

FOODY: That certainly could be the case.

COWEN: And focusing on bigger picture in some useful ways, at least for being a founder. Not good for every job, of course.

FOODY: Totally. One thing I really came to appreciate, especially during high school, is that there are certain things that some people are phenomenal at, and others are horrible at. There were areas of debate, like reading through evidence quickly, where I felt extremely unintelligent, and it was super humbling. So much of finding success in your career is just understanding what are your strengths, and how do you leverage those, and much less about what are your weaknesses.

That’s something that I’ve taken with how I approach Mercor, but also how I encourage our employees to think about their roles within the business. What are the things where they have these comparative advantages and phenomenal strengths, and how do they leverage those most effectively.

COWEN: How much do you feel you’re in touch with the general culture of intelligent 22-year-old men in the United States? Or are you just so in the company you have no idea what’s going on?

FOODY: I’m so in the company, I don’t know. I obviously was in college for a couple of years before I dropped out, and so I had some people around me. So much of our company is 22 plus or minus a couple of years, so I guess I have that heuristic. I certainly don’t think that I have spent as much time with people my age as if I had stayed in school, as another comparison.

COWEN: This is not a question about you, because we don’t ask personal questions, but a good tech friend of mine — you’ve probably heard of him — he says men in that age bracket, 22, 23, that there truly is a dating crisis, that something has gone wrong. Not about you, but just America in general, the very smart, possibly nerdy person in that age group. Is there a dating crisis?

FOODY: I think certainly in San Francisco. Not in New York, [laughs] but certainly in San Francisco.

COWEN: You think it’s just gender imbalance? Or the country is screwed up more generally?

FOODY: I haven’t thought too much about this. I think it’s probably gender imbalance in San Francisco, especially in certain industries. I don’t use dating apps, but I think they are, generally, in society helping to drive a lot more efficiency in solving this matching problem.

COWEN: So, you’re pro dating app. Most of the people I know are against them.

FOODY: No, I think they’re good. I’m very much a proponent of better technology to solve these matching problems and enable people to be happy in their lives.

COWEN: Your last name is Foody. Should I believe in nominative determinism? Are you a foodie?

FOODY: I certainly love my diet and good food. It’s funny. My dad always loved cooking growing up, and was certainly much more of a foodie than I am, but a little bit of it rubbed off on me. While I’m not as much into cooking, I love eating good food.

COWEN: Where in San Francisco should people eat? Or nearby?

FOODY: Lots of good restaurants. There are the everyday restaurants that I think are very good, and then higher-end. For everyday, I love Mexican food. El Metate is a great Mexican restaurant. For higher-end food, I like Cotogna in California, Quince, lots of good restaurants like that.

COWEN: At the meta level, what’s the thing people should know about eating out here? Where I live, I would just say you need to know to go to the suburbs. It may or may not be true here, but here, what do they need to know, other than particular names of restaurants?

FOODY: I find that Beli is really accurate, the app for food ratings in San Francisco, because there’s a high density of users. So, if you use Beli as your guide, you’ll generally find good spots.

COWEN: Why is the company called Mercor?

FOODY: Mercor means marketplace in Latin, and we want to build the largest marketplace in the world, so we named it Mercor.

COWEN: We’re from Mercatus. Do you know what Mercatus means in Latin? It’s a variant. It means market.

FOODY: Okay, there you go.

COWEN: Yes, we’re from the same named institution, in that sense.

FOODY: [laughs] Exactly. Well, it’s funny. In high school, my co-founders and I went to a Jesuit school, and my co-founder, Surya, studied Latin, and so we’ve always certainly thought a lot about Latin roots and Latin words.

COWEN: Your family wasn’t Catholic, I believe, right?

FOODY: That’s correct.

COWEN: Did going to a Jesuit school help you think? Or what did that add to the mix?

FOODY: Well, none of the three of us were Catholic despite going to Catholic school, which was a little bit funny. One [laughs] interesting story is that my mom was concerned about whether I would start selling drugs when I was doing my donut stand in 8th grade, because it’s an easy step. I like to think that Catholic school helped instill good values in what I should care about, and being very focused on school at the time, on speech and debate, on building companies, and so, very grateful for that education.

COWEN: Last two questions. First, what’s the next goal you have for the company?

FOODY: The next goal for the company is really scaling up a lot of these super-realistic evaluations that I’ve talked about. How do we measure the ways that models use all sorts of different tools on trajectories that would take someone days or weeks to do? That is a big focus for us, and especially how that impacts enterprise, right?

For the last two years, people have been very focused on the idea of intelligence rather than the idea of models being useful. Bridging the gap between what do enterprises actually want to use, how do we measure that, and how do we get those capabilities and models is, to me, the most exciting thing that I could work on.

COWEN: What do you want to learn next, work-related or otherwise?

FOODY: That’s an interesting question. I feel like Mercor is at the intersection of labor markets and AI research. We grew up with the DNA in labor markets, of thinking all about how do we aggregate all these people on our platform? How do we match them? We hired people that are deep domain experts in labor markets like Sundeep Jain, who was the chief product officer, chief technology officer at Uber.

But I am most fascinated by all the advancements in AI research, of how do we apply human talent and human labor to all of these problems at the frontier, and more efficient ways to train models, and what are the specific rubrics or data types that are driving the most model improvement. I’ve been most interested in how to learn that.

COWEN: Brendan Foody, thank you very much.

FOODY: Thank you so much for having me, Tyler.